The King is Dead: How HappyHorse Dethroned Seedance 2.0 to Claim the #1

If your X feed or AI circles have been anything like mine over the last 48 hours, you’ve probably been hit with a wave of 'Happy Horse' content.

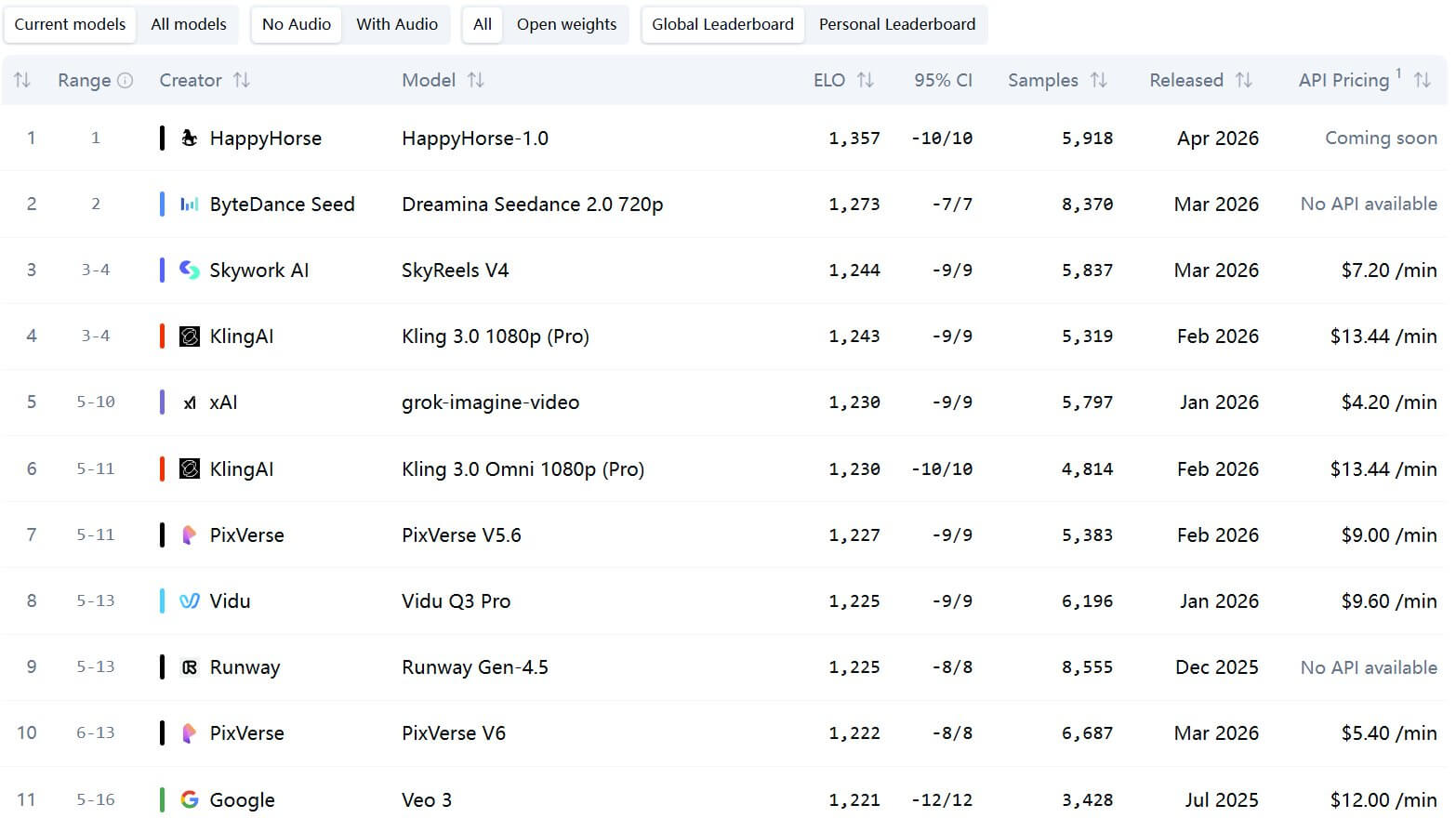

With no keynote or fanfare, HappyHorse just executed a clean sweep of the Artificial Analysis leaderboard, dethroning ByteDance’s Seedance 2.0 as the new #1 in both Text-to-Video and Image-to-Video. But this isn't just about a higher Elo score; it’s a total disruption of the "closed-source" status quo.

From its mysterious pedigree to its native multimodal architecture, here is why HappyHorse is the new gold standard for professional AI video.

The Pedigree—From "Mystery Model" to Identity Reveal

For the first 48 hours, the AI community was playing a high-stakes guessing game. Rumors flew, with many betting on a stealth Google Veo leak or a new Silicon Valley heavyweight.

The truth, once revealed, sent shockwaves through the industry: This isn’t a scrappy startup. It is the work of Alibaba’s "Future Living Lab" (TTG), led by the man who arguably holds the most formidable track record in video generation today: Zhang Di.

The Architect of Motion: Who is Zhang Di?

Zhang Di is not your typical technical lead. He is a heavyweight veteran with a "full circle" story:

The Academic Core: An alum of Shanghai Jiao Tong University (Bachelor’s & Master’s).

The Kling Era: As the former VP of Kuaishou and the No. 1 Lead of Kling AI, he was the architect behind the first model that proved China could match—and in some ways, surpass—Seedance’s capabilities.

The Alibaba Homecoming: Before his stint at Kuaishou, he headed the Big Data and ML Engineering Architecture at Alimama. Now back at Alibaba as a P11 leader, he reports directly to Zheng Bo (CTO of Alimama and Chief Scientist of TTG).

HappyHorse isn't an experiment. It’s the result of the industry’s elite "E-commerce + Visual AI + Multimodal" brain trust. It’s a model built not just to impress, but to dominate the production workflow.

The Seedance 2.0 Killer? HappyHorse Blind Test Comparison.

The rankings on Artificial Analysis’s Video Arena are far more than just marketing fluff or cherry-picked sizzle reels. This is raw social proof, built on a rigorous Elo-based system fueled by thousands of blind "A/B" tests from the community.

Text-to-Video: With an Elo of 1379, HappyHorse claimed the #1 spot, gapping the runner-up—ByteDance’s flagship Seedance 2.0—by a staggering 106 points. To put that in perspective, Seedance 2.0 has been the undisputed heavyweight champion of this list for months.

Image-to-Video: It swept the second category with an Elo of 1411, sitting 55 points clear of the field.

Note

It’s important to understand the "Arena" logic: users vote on side-by-side clips without knowing which model is which. A high Elo doesn't just mean "better physics"—it means human-centric appeal. It’s a measure of what looks "right" to the human eye, prioritizing aesthetic intuition over raw synthetic data.
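To put those Elo gaps in perspective, here is a minimal sketch that converts a rating gap into an expected head-to-head win rate. It assumes the textbook logistic Elo formula with a 400-point scale. Artificial Analysis may compute its ratings differently, so treat the numbers as approximate. The function name `expected_win_rate` is illustrative, not part of any published tool.

```python
def expected_win_rate(elo_gap: float, scale: float = 400.0) -> float:
    """Textbook Elo expectation: chance the higher-rated model wins one pairing."""
    return 1.0 / (1.0 + 10.0 ** (-elo_gap / scale))


# Gaps quoted above: 106 points (Text-to-Video) and 55 points (Image-to-Video).
t2v = expected_win_rate(106)
i2v = expected_win_rate(55)

print(f"Text-to-Video:  {t2v:.0%} expected win rate")   # roughly 65%
print(f"Image-to-Video: {i2v:.0%} expected win rate")   # roughly 58%
```

Under this assumption, a 106-point lead means HappyHorse would be preferred in roughly two out of three blind pairings against the runner-up. That is a clear edge, but not a shutout.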

I spent the afternoon grinding through the Arena myself. After consistently "betting on the Horse" in head-to-head battles, the reality is undeniable: this isn't just a honeymoon phase—HappyHorse-1.0 is a legitimate powerhouse.

Reveal:

In the first matchup, the difference was night and day. On the right, we had a shot that looked like a high-budget film opening—nuanced lighting, rich textures, and sophisticated lens work. On the left? An aggressively over-saturated "blood-red sky" that felt cartoonish and forced. The winner on the right was HappyHorse.

Reveal:

"Press Conference" prompt—a classic test for multi-character consistency. HappyHorse (on the right) nailed it. The camera transitions from close-ups to wide shots were seamless, perfectly capturing the chaotic energy of a media scrum. Meanwhile, the contender on the left felt "off"—the character placement was awkward, and it lacked any sense of atmosphere.

Reveal:

While both models started with the same reference photo, the execution diverged quickly. The clip on the left rushed the camera zoom, creating a jarring effect, and the subject’s face had that "uncanny valley" smoothness—too perfect to be real. HappyHorse (on the right) kept the zoom steady and cinematic, while preserving the micro-textures of the skin (pores, wrinkles, and shadows).

The Verdict: A Statistical Anomaly?

Whenever HappyHorse was an option, I picked it nearly 90% of the time. This isn't just a "new model" bump. When a model consistently beats established giants like Veo and specialty players like PixVerse in a blind setting, it strongly suggests that its motion and aesthetic quality are a real step up rather than a novelty effect. It’s no wonder it "air-dropped" straight to the #1 spot: the users have already spoken.

The Workflow Revolution—Why HappyHorse Wins

Let’s cut through the noise. HappyHorse isn't just another model; it is a production-grade engine designed to solve the three biggest pain points in AI filmmaking: audio sync, localization, and control.

Native Multimodal: The End of "Silent Film"

The days of generating a silent clip and wrestling with third-party audio tools are over. HappyHorse utilizes a unified architecture that generates video and high-fidelity audio simultaneously.

Global Lip-Sync: 7 Languages, Zero Friction

HappyHorse natively supports 7 languages (English, Chinese, Japanese, Korean, French, German, and Spanish). Unlike competitors that simply "overlay" mouth movements, this model adapts the actual physics of the lips to the phonetics of each language. Localized content finally looks authentic, not like a bad dub.

The Industry Disruptor: Open Sovereignty

If the current industry rumors and leaked GitHub repositories hold true, HappyHorse-1.0 is positioned for a potentially massive April 10th debut with a focus on Total Transparency. Unlike its rivals, the buzz suggests a model built for the community:

The Open-Source Edge

- Run it Anywhere: Freedom to execute on your own local hardware.

- Zero "Credit Fatigue": No more metered usage or expensive tokens for every retake.

- Privacy First: Fine-tune the model with your own IP without it ever leaving your server.

2026 Heavyweight Showdown: How They Stack Up

| Feature | HappyHorse 1.0 | Seedance 2.0 | Kling 3.0 (Omni) | Google Veo 3.1 |
|---|---|---|---|---|
| Access Model | Open Weights (Apr 10) | Closed / Web & API | Closed / Pro Subscription | Closed / Vertex AI Beta |
| Audio Architecture | Native Multimodal | Unified (Post-Sync) | Native Omni-Sync | Native Unified |
| Max Resolution | 1080p Cinematic | 4K | 1080p | 1080p |
| Lip-Sync Support | 7 Languages | Standard | Global (Omni-model) | Standard |
| Motion Physics | High / Cinematic Logic | Extreme / Dynamic | Ultra-Fluid / Realistic | Stable / Consistent |

Quick Start Guide—How to Ride the "Happy Horse"

With the official GitHub repository currently displaying a "Coming Soon" placeholder, the AI community is circling April 10th as the potential "moment of truth." While we wait for the final code push, here is how you can position yourself to ride the wave without the technical friction.

1. The Local Route: For the Hardcore Devs

Once the weights drop on GitHub/HuggingFace, be ready for significant hardware demands.

Requirements: A minimum of 24GB VRAM (RTX 4090) for inference; an NVIDIA H100 for production-grade speed.

Setup: You'll need a Python 3.10 environment and the latest CUDA drivers.
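While the weights are still unreleased, you can at least check whether a machine meets the stated minimums. The sketch below uses only the Python standard library plus the `nvidia-smi` command that ships with NVIDIA drivers. It makes two assumptions: that "Python 3.10" means 3.10 or newer, and that a 24,000 MiB threshold is fair, because nominal 24 GB cards report slightly less than 24,576 MiB. The helper names are hypothetical and are not part of any HappyHorse tooling.

```python
import shutil
import subprocess
import sys

MIN_PYTHON = (3, 10)    # from the article; "or newer" is an assumption
MIN_VRAM_MIB = 24_000   # nominal 24 GB cards report a bit under 24576 MiB


def parse_vram_mib(smi_output: str) -> list[int]:
    """Parse one integer (MiB) per GPU from nvidia-smi CSV output."""
    values = (line.strip() for line in smi_output.splitlines())
    return [int(v) for v in values if v.isdigit()]


def query_vram_mib() -> list[int]:
    """Return total memory per GPU in MiB, or [] if nvidia-smi is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=False,
    )
    return parse_vram_mib(result.stdout) if result.returncode == 0 else []


def is_ready(python_version: tuple[int, int], vram_mib: list[int]) -> bool:
    """True if the interpreter and at least one GPU meet the stated minimums."""
    return python_version >= MIN_PYTHON and any(v >= MIN_VRAM_MIB for v in vram_mib)


if __name__ == "__main__":
    gpus = query_vram_mib()
    print("Python:", sys.version.split()[0])
    print("GPU memory (MiB):", gpus or "no NVIDIA GPU detected")
    print("Meets the stated minimum:", is_ready(sys.version_info[:2], gpus))
```

Passing this check only confirms the baseline quoted above. Actual requirements may change once the code is published.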

2. The Production Route: ChatArt (Recommended)

If you don't have a high-end GPU cluster, the most efficient way to use HappyHorse is through ChatArt.

ChatArt has already optimized its infrastructure to support the next generation of video models. Instead of wrestling with local dependencies, you can use its cloud platform, which offers:

- All-in-One Model Hub: Access a curated powerhouse of the world’s leading AI engines—including HappyHorse 1.0, Seedance 2.0, and Kling.

- Pro-Grade Video Templates: Create high-end AI MVs and social media ads instantly using a vast library of pro-grade visual presets.

- 100+ Creative Assistants: Go beyond simple chatting with dedicated AI assistants for every creative niche, including scriptwriting and high-fidelity image composition.

- Hardware-Free Rendering: Skip the expensive GPUs. Render 1080p cinematic video and native lip-sync directly in your browser.

- Unified Multimodal Suite: Seamlessly bridge the gap between text, image, and video. Generate a story, design the characters, and render the final cinematic masterpiece all within one fluid ecosystem.

FAQ: Everything You Need to Know About HappyHorse

1. What is HappyHorse exactly?

It’s a state-of-the-art AI video model from Alibaba’s Future Living Lab. It currently holds the #1 spot on the Artificial Analysis Video Arena, dominating both Text-to-Video (T2V) and Image-to-Video (I2V) benchmarks.

2. Is it really better than Veo or Seedance 2.0?

In terms of human preference (blind tests), yes. It currently leads in visual fidelity and "cinematic feel."

3. Which languages are supported for Lip-Sync?

It natively supports 7 languages: English, Chinese, Japanese, Korean, French, German, and Spanish. It doesn't just "dub"; it adapts the actual physics of the mouth to match the phonetic nuances of each language.

4. When and where can I download it?

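If the rumored schedule holds, the model will be open-sourced on April 10, 2026, with the weights expected on GitHub/HuggingFace. Nothing is confirmed yet: the official repository still shows a "Coming Soon" placeholder.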

5. I don't have a high-end GPU. Can I still use it?

Absolutely. If you want to skip the technical setup and hardware costs, the easiest way to access HappyHorse and other top-tier models (like Seedance 2.0) is through ChatArt.

Conclusion

The emergence of HappyHorse marks the end of the "Seedance-style" monopoly on high-end video, shifting the power back to creators through the marriage of native multimodal generation and an open-source philosophy. Whether you are a developer seeking total local control or a director leveraging the frictionless cloud power of ChatArt, the expected April 10 launch signals a new reality: the bottleneck is no longer expensive hardware or closed algorithms. It’s simply your imagination.
